I, for one, also think that the article's summary is too modest. Linux MD RAID is far superior to the hardware solutions, not only for the reasons given above but also for its performance. In my experience, even with the most expensive solutions of the time (including the DAC960 SCSI-to-SCSI bridges and, to some extent, 3ware HW RAID cards), HW RAID performance sucked. The typical HW RAID controller happily accepts your write requests and then stalls a later read request, behaving much like bufferbloat in networking. The kernel, on the other hand, knows which requests processes are actually waiting on and can prioritise them accordingly. The kernel can also use the whole of RAM as a cache, while the cache on a HW RAID controller is much more expensive per byte and usually unobtainable in sizes comparable to the RAM of modern computers. For me, 'HW RAID' is a bad joke.
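
The read-stall effect described above can be illustrated with a toy model. This is a hypothetical simulation, not how the Linux block layer or any particular controller works. It assumes every request takes one tick to service and compares two policies. The first is a controller that drains queued writes in FIFO order. The second is a scheduler that knows a read is being waited on and serves it first, loosely in the spirit of read-favouring Linux I/O schedulers such as mq-deadline. The names `Request`, `simulate` and `SERVICE_TIME` are invented for the example.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    kind: str     # "read" or "write"
    arrival: int  # tick at which the request was submitted


SERVICE_TIME = 1  # assumption: every request takes one tick to service


def simulate(requests, prioritise_reads):
    """Return the completion latency (in ticks) of every read request.

    Requests are served one at a time. With prioritise_reads=False this
    models a controller draining its queue in FIFO order; with
    prioritise_reads=True it models a scheduler that serves a waiting
    read ahead of any queued writes.
    """
    pending = sorted(requests, key=lambda r: r.arrival)
    queue = deque()
    clock = 0
    latencies = []
    i = 0
    while i < len(pending) or queue:
        # Admit every request that has arrived by the current tick.
        while i < len(pending) and pending[i].arrival <= clock:
            queue.append(pending[i])
            i += 1
        if not queue:
            clock = pending[i].arrival  # idle until the next arrival
            continue
        if prioritise_reads:
            # Pick the oldest queued read, falling back to the queue head.
            req = next((r for r in queue if r.kind == "read"), queue[0])
            queue.remove(req)
        else:
            req = queue.popleft()
        clock += SERVICE_TIME
        if req.kind == "read":
            latencies.append(clock - req.arrival)
    return latencies


# Example workload: a burst of 100 buffered writes, then one read at tick 1.
burst = [Request("write", 0) for _ in range(100)] + [Request("read", 1)]
```

With this workload, `simulate(burst, prioritise_reads=False)` returns `[100]`: the read waits behind all 99 remaining writes. `simulate(burst, prioritise_reads=True)` returns `[1]`. Real schedulers also have to stop writes from starving indefinitely, which this sketch ignores.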

JBOD with Linux MD RAID is much better.

I am not sure what you mean by 'reliability and robustness at the system level'. Sure, battery-backed RAM is an advantage (at least until the MD journal reaches end-user kernels). But I have lots of stories where HW RAID failed for bizarre reasons. In one case, a failed drive was replaced with a vendor-provided one that had not been erased beforehand. That drive destroyed the configuration of the whole array, because the controllers decided that the configuration stored on the replacement drive was somehow newer than the configuration stored on the rest of the drives. So no, my experience tells me that reliability and robustness are on the Linux MD RAID side.
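
The comment does not say how those controllers chose which copy of the configuration to trust. The sketch below is a purely hypothetical illustration of how a "newest generation wins" rule can go wrong. It also shows a stricter rule that first narrows the choice to the array identity most member disks agree on. The field names are invented for the example. They are loosely modelled on the array UUID and event counter that MD superblocks carry, but this is not mdadm's actual assembly logic.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class DiskMetadata:
    disk: str        # device name, e.g. "sda" (hypothetical)
    array_uuid: str  # which array this metadata claims to belong to
    generation: int  # bumped on every configuration change


def naive_pick(disks):
    # Hypothetical rule: trust whichever disk claims the newest configuration.
    return max(disks, key=lambda d: d.generation)


def safer_pick(disks):
    # Only consider metadata from the array identity most disks agree on,
    # then take the newest generation within that array.
    majority_uuid, _ = Counter(d.array_uuid for d in disks).most_common(1)[0]
    members = [d for d in disks if d.array_uuid == majority_uuid]
    return max(members, key=lambda d: d.generation)


# Three healthy members plus an unerased replacement carrying stale
# metadata (with a large counter) from some other array.
disks = [
    DiskMetadata("sda", "array-A", 42),
    DiskMetadata("sdb", "array-A", 42),
    DiskMetadata("sdc", "array-A", 42),
    DiskMetadata("sdd", "array-Z", 977),
]
```

Here `naive_pick(disks).disk` is `"sdd"`, the unerased replacement, while `safer_pick(disks).disk` is `"sda"`. A majority vote is not a complete answer either (a two-disk mirror can tie), so this only shows why blindly trusting the highest counter is fragile.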

This is one area where our experiences differ. In fifteen years of using 3Ware RAID cards, for example, I've never had a single controller-induced failure, or data loss that wasn't the result of blatant operator error (or multiple drive failures). My experience with the DAC960/1100 series was similar (though I did once have a controller fail; there was no data loss once it was swapped). I've even gone through the exact failure scenario you describe multiple times. Even in the days of PATA/PSCSI, hot-swapping (and hot spares) just worked with those cards.

(3Ware cards, the DAC family, and a couple of the Dell PERC adapters were the only ones I had good experiences with; the rest ranged from WTF to outright horror. Granted, my experience is now about five years out of date.) Meanwhile, the Supermicro-based server next to me actually locked up two days ago when I attempted to swap a failing eSATA-attached drive used for backups.

But my specific point about robustness is that you can easily end up with an unbootable system if the wrong drive fails in an MDRAID array that contains /boot. And if you don't put /boot on an array, you end up in the same position. (To work around this, I traditionally put /boot on a PATA CF card or USB stick, which I regularly imaged and backed up so I could immediately swap in a replacement.) FWIW, I retired the last of those MDRAID systems about six months ago.
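
Both sides of this exchange depend on noticing the first drive failure before a second one, or the "wrong" one, takes the system down. On Linux, `mdadm --monitor` is the usual tool for this. As a minimal sketch, the function below scans the text of /proc/mdstat and reports arrays whose `[total/active]` status shows a missing member. The regular expressions are assumptions based on the common mdstat layout. In that layout, each array's header line starting with `mdN :` is followed by a line ending in something like `[2/1] [U_]`. Other RAID levels (raid0 has no such status) or kernel versions may format things differently. The function name `degraded_arrays` is hypothetical.

```python
import re

# Array header line, e.g. "md0 : active raid1 sdb1[1] sda1[0]".
ARRAY_RE = re.compile(r"^(md\S*)\s*:")
# Member status, e.g. "1048512 blocks super 1.0 [2/1] [U_]".
STATUS_RE = re.compile(r"\[(\d+)/(\d+)\]\s+\[([U_]+)\]")


def degraded_arrays(mdstat_text):
    """Return names of md arrays whose active member count is below the total."""
    degraded = []
    current = None
    for line in mdstat_text.splitlines():
        header = ARRAY_RE.match(line)
        if header:
            current = header.group(1)
            continue
        status = STATUS_RE.search(line)
        if current and status:
            total, active = int(status.group(1)), int(status.group(2))
            if active < total or "_" in status.group(3):
                degraded.append(current)
            current = None  # only the first status line belongs to this array
    return degraded
```

Pass it the contents of the file, e.g. `degraded_arrays(open("/proc/mdstat").read())`. An array shown as `[2/1] [_U]` is reported, while one shown as `[2/2] [UU]` is not. A dedicated monitor remains the better choice for alerting.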

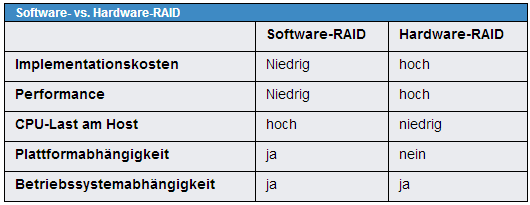

Hardware RAID vs. Software RAID: Which Implementation is Best for my Application?

Additionally, hardware-assisted software RAID usually comes with drivers for the most popular operating systems and is therefore more OS-independent than pure software RAID.